3D Mesh Generation

3D mesh generation refers to the use of AI models to automatically create three-dimensional polygon meshes — the fundamental representation used in games, film, AR/VR, and industrial design. Where traditional 3D modeling requires skilled artists spending hours or days per asset, generative approaches can produce usable meshes in seconds to minutes from text descriptions, reference images, or rough sketches.

The field has progressed through several architectural approaches. Early methods generated voxel grids (the 3D analogue of pixels) or point clouds. Both were computationally tractable at low resolution, but voxel memory grows cubically with resolution, so results were coarse and blocky, and point clouds carry no surface connectivity. Implicit neural representations (networks that learn a continuous function mapping 3D coordinates to occupancy or signed distance values) improved quality dramatically, but they need a separate and expensive extraction step, typically marching cubes over a dense grid of network evaluations, to produce usable meshes.
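To make the extraction cost concrete, here is a minimal sketch in plain Python. It assumes an analytic sphere as a stand-in for a learned signed distance network, and it performs only the first step of marching cubes: finding the grid cells the surface passes through. The function names (`sphere_sdf`, `sample_grid`, `surface_cells`) are illustrative and do not come from any particular library.

```python
import math

def sphere_sdf(x, y, z, radius=0.5):
    """Signed distance to a sphere at the origin: negative inside, positive outside.

    Stand-in for a trained network; a real implicit model would run a forward pass here.
    """
    return math.sqrt(x * x + y * y + z * z) - radius

def sample_grid(sdf, n, lo=-1.0, hi=1.0):
    """Evaluate sdf at the n*n*n corner points of a regular grid over [lo, hi]^3."""
    step = (hi - lo) / (n - 1)
    coords = [lo + i * step for i in range(n)]
    return [[[sdf(x, y, z) for z in coords] for y in coords] for x in coords]

def surface_cells(values):
    """Return indices of grid cells whose eight corners do not all share a sign.

    These are the only cells for which marching cubes would emit triangles.
    """
    n = len(values)
    cells = []
    for i in range(n - 1):
        for j in range(n - 1):
            for k in range(n - 1):
                inside = [values[i + di][j + dj][k + dk] < 0.0
                          for di in (0, 1) for dj in (0, 1) for dk in (0, 1)]
                if any(inside) and not all(inside):
                    cells.append((i, j, k))
    return cells

def count_surface_cells(n):
    """Convenience wrapper: number of surface-crossing cells at grid resolution n."""
    return len(surface_cells(sample_grid(sphere_sdf, n)))
```

Every grid corner has to be evaluated even though only a thin shell of cells contains the surface. Doubling the resolution multiplies the number of evaluations by about eight, while the number of surface cells grows only roughly fourfold, which is why dense extraction becomes the bottleneck when each evaluation is a network call.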

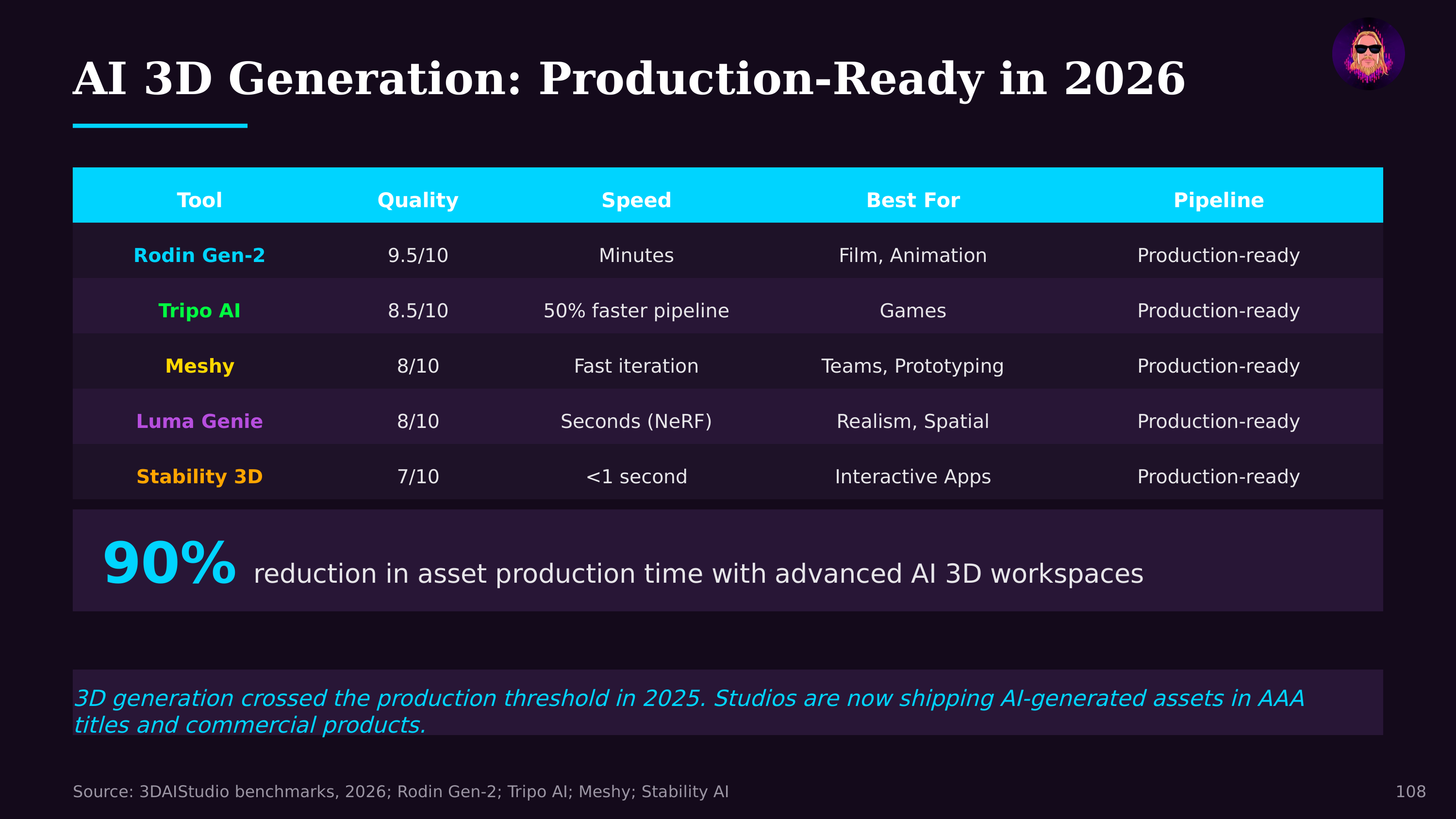

Current models produce meshes more directly. Systems such as Meshy, Tripo, Rodin, and InstantMesh can output textured 3D models from single images or text prompts. They typically combine diffusion architectures adapted from 2D image generation with 3D-aware decoders. Some generate multi-view images first and then reconstruct geometry from those views; others operate directly in a 3D latent space.

The Integrated AI Asset Pipeline

By 2026, standalone mesh generation has evolved into a comprehensive AI-assisted 3D asset pipeline. The workflow is no longer just "prompt → mesh" but a multi-stage creative process where AI participates at every step:

Concept to mesh: Text or image prompts generate initial 3D geometry in seconds. Meshy v4 and Tripo 2.0 produce game-ready meshes with clean topology and reasonable poly counts. Luma Genie generates meshes from multi-angle photos. Rodin Gen-2 creates rigged, animatable characters from single reference images.

Automated retopology and UV mapping: AI tools clean up generated meshes automatically — converting dense, irregular geometry into quad-dominant topology suitable for animation, and generating UV layouts for texturing. What previously required hours of tedious manual work now happens in minutes.

AI texturing: Tools like Meshy's texturing pipeline, Adobe's Substance 3D with AI assists, and dedicated platforms apply PBR (physically based rendering) materials contextually — understanding that a sword blade should be metallic while its grip should be leather, without manual material assignment.

Animation and rigging: Auto-rigging services attach skeleton structures to generated meshes and apply motion capture or procedural animation. A character can go from text prompt to animated, game-engine-ready asset in under an hour.
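The texturing stage described above ultimately writes a small set of material parameters per mesh part. The sketch below shows what that output might look like for the sword example, using the property names of the glTF 2.0 metallic-roughness model. The `PbrMaterial` class, the `PART_MATERIALS` table, and all numeric values are hypothetical and hand-written for illustration; in an AI texturing tool the part labels and parameters would be predicted by a model rather than looked up.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class PbrMaterial:
    """Hypothetical container for metallic-roughness PBR parameters."""
    name: str
    base_color: tuple  # linear RGB, each channel in [0, 1]
    metallic: float    # 0.0 = dielectric, 1.0 = metal
    roughness: float   # 0.0 = mirror-smooth, 1.0 = fully diffuse

    def to_gltf(self):
        """Serialise to a glTF 2.0 material dictionary."""
        r, g, b = self.base_color
        return {
            "name": self.name,
            "pbrMetallicRoughness": {
                "baseColorFactor": [r, g, b, 1.0],
                "metallicFactor": self.metallic,
                "roughnessFactor": self.roughness,
            },
        }

# Illustrative values only: a polished metal blade and a rough, non-metallic grip.
PART_MATERIALS = {
    "blade": PbrMaterial("steel", (0.80, 0.80, 0.82), metallic=1.0, roughness=0.25),
    "grip": PbrMaterial("leather", (0.25, 0.13, 0.07), metallic=0.0, roughness=0.80),
}

def assign_materials(part_labels):
    """Map each labelled mesh part to a glTF material; unknown labels get a neutral default."""
    default = PbrMaterial("default", (0.5, 0.5, 0.5), metallic=0.0, roughness=0.5)
    return {part: PART_MATERIALS.get(part, default).to_gltf() for part in part_labels}
```

Calling `assign_materials(["blade", "grip"])` returns one material dictionary per part, ready to be placed in the `materials` array of a glTF file.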

Output quality has improved to the point where the best models produce meshes with clean topology, reasonable polygon counts, and coherent UV-mapped textures. Such assets can be imported into game engines or 3D applications with minimal cleanup. The gap between AI-generated and hand-crafted assets is narrowing rapidly for common object categories (furniture, vehicles, characters, props).
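Criteria such as "clean topology" and "reasonable polygon counts" can be partly checked automatically before an asset enters an engine. The following is a minimal sketch in plain Python, assuming a mesh is given as a vertex list plus faces expressed as tuples of vertex indices. The function names and the `max_faces` budget are hypothetical, and a production pipeline would test far more (normals, UV overlap, degenerate faces).

```python
from collections import Counter

def edge_counts(faces):
    """Count how many faces use each undirected edge."""
    counts = Counter()
    for face in faces:
        # Pair each vertex with the next one, wrapping around to close the polygon.
        for a, b in zip(face, face[1:] + face[:1]):
            counts[(min(a, b), max(a, b))] += 1
    return counts

def mesh_report(vertices, faces, max_faces=50_000):
    """Summarise basic topology facts; max_faces is an arbitrary example budget."""
    edges = edge_counts(faces)
    quads = sum(1 for f in faces if len(f) == 4)
    return {
        "faces": len(faces),
        "within_budget": len(faces) <= max_faces,
        "quad_ratio": quads / len(faces) if faces else 0.0,
        # Closed surface: every edge is shared by exactly two faces.
        "watertight": bool(edges) and all(c == 2 for c in edges.values()),
        # V - E + F equals 2 for a closed surface without holes or handles.
        "euler_characteristic": len(vertices) - len(edges) + len(faces),
    }

# Unit cube as a test asset: vertex index = 4*x + 2*y + z.
CUBE_VERTICES = [(x, y, z) for x in (0, 1) for y in (0, 1) for z in (0, 1)]
CUBE_FACES = [
    (0, 1, 3, 2), (4, 6, 7, 5),  # x = 0 and x = 1
    (0, 4, 5, 1), (2, 3, 7, 6),  # y = 0 and y = 1
    (0, 2, 6, 4), (1, 5, 7, 3),  # z = 0 and z = 1
]
```

Running `mesh_report(CUBE_VERTICES, CUBE_FACES)` reports an all-quad, watertight mesh, while dropping any one face leaves boundary edges and the watertight check fails.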

The implications for content creation pipelines are significant. Text-to-3D workflows enable rapid prototyping: describe an asset in natural language and get a starting point in seconds. Combined with Gaussian splatting for captured environments and virtual geometry systems like Nanite for rendering, the entire pipeline from concept to real-time visualization is compressing. For the creator economy, AI mesh generation follows the familiar democratization pattern, in which capabilities that once required specialized skills and expensive tools become accessible to anyone with a text prompt. It is another instance of agentic engineering collapsing the gap between vision and execution.

Further Reading

- Software's Creator Era Has Arrived — Jon Radoff