AI Datacenters

AI datacenters are purpose-built computing facilities designed for the extreme power density, cooling requirements, and networking demands of AI model training and inference at scale. They represent the physical infrastructure layer of the AI revolution — and the largest capital expenditure build-out in technology history. Increasingly, they are being reconceived as AI factories whose primary output is tokens rather than traditional compute services.

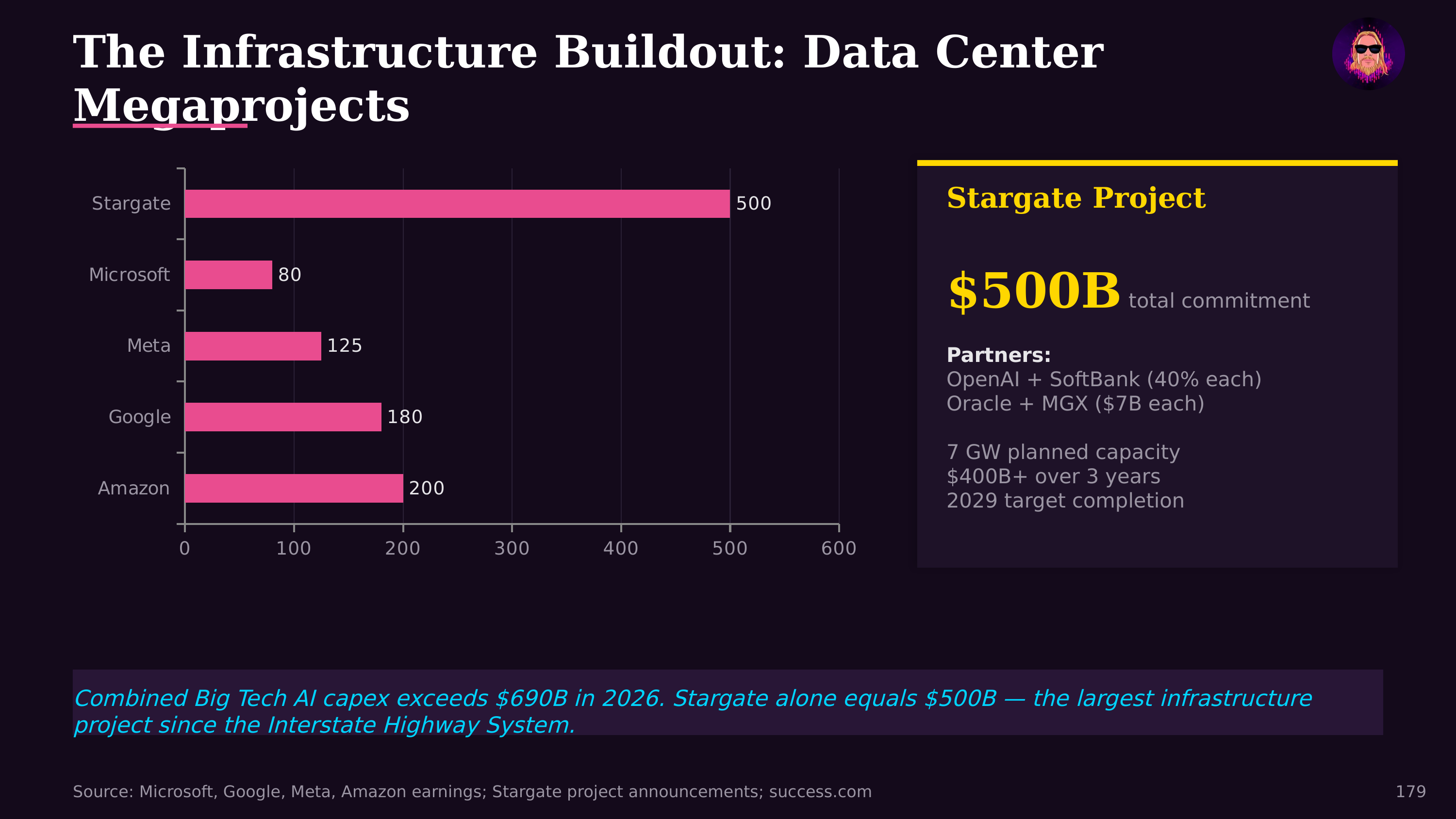

The Scale of Investment

The scale is staggering. Meta's planned 2026 capital expenditure of $135 billion flows primarily into AI infrastructure. Microsoft, Google, Amazon, and Oracle are each spending tens of billions annually on datacenter construction. The total global investment in AI datacenter infrastructure is expected to exceed $500 billion in 2026. NVIDIA alone reports $500 billion in Blackwell/Rubin GPU orders in 2026, projected to exceed $1 trillion by 2027. These are not traditional server farms: they're industrial facilities with power requirements measured in hundreds of megawatts to gigawatts.

The AI Factory Paradigm

Jensen Huang's GTC 2026 keynote reframed the datacenter entirely: future AI datacenters are AI factories whose product is tokens. The key optimization metric shifts from raw compute capacity to token throughput per watt. A 1-GW facility cannot become 2 GW without new power infrastructure, so revenue growth comes from increasing output per watt through better silicon (the Vera Rubin platform claims 35x token throughput over Hopper), better software, and systems-level optimization. NVIDIA's Dynamo OS manages GPU scheduling and token routing, while the DSX Platform provides digital twin blueprints for factory design and operation — from mechanical simulation to power grid optimization.
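The power-constrained argument can be made concrete with a little arithmetic. The sketch below is illustrative only: the baseline efficiency of 0.5 tokens per joule is a hypothetical placeholder rather than a measured figure, and it assumes the claimed 35x improvement applies per watt and that the whole power budget reaches the accelerators. Throughput per watt (tokens per second per watt) is the same quantity as tokens per joule.

```python
# Token output of a facility whose power budget is fixed.
FACILITY_POWER_W = 1e9             # 1 GW, the fixed budget used in the text
BASELINE_TOKENS_PER_JOULE = 0.5    # hypothetical placeholder, not a measured value
CLAIMED_SPEEDUP = 35               # claimed Vera Rubin gain over Hopper (assumed per watt)


def tokens_per_second(power_w: float, tokens_per_joule: float) -> float:
    """Sustained output when power, not floor space, is the binding limit."""
    return power_w * tokens_per_joule


baseline = tokens_per_second(FACILITY_POWER_W, BASELINE_TOKENS_PER_JOULE)
upgraded = tokens_per_second(
    FACILITY_POWER_W, BASELINE_TOKENS_PER_JOULE * CLAIMED_SPEEDUP
)

print(f"baseline: {baseline:.2e} tokens/s")
print(f"upgraded: {upgraded:.2e} tokens/s at the same power draw")
print(f"gain:     {upgraded / baseline:.0f}x")
```

Because the power term is fixed, output scales only with the efficiency term, which is why the metric of interest becomes tokens per watt rather than installed compute.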

Physical Requirements

AI workloads impose fundamentally different demands than traditional cloud computing:

- Power density: A rack of NVIDIA H100 or B200 GPUs draws 40-120 kW, versus 5-15 kW for traditional servers. This concentrated thermal load requires advanced liquid cooling rather than conventional air conditioning.

- Networking: Training large models requires high-speed interconnects (InfiniBand, NVLink, or custom fabrics) that can move data between thousands of GPUs at terabits per second with microsecond latency.

- Reliability: A training run that takes weeks and costs millions of dollars in compute cannot afford to lose its progress to a hardware failure, so facilities rely on extensive redundancy and frequent checkpointing.
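To give a sense of what the rack power figures above imply, the following sketch counts how many racks a fixed IT power budget could feed at each density. The 100 MW budget is a hypothetical example, and the calculation ignores cooling and distribution overhead, so the results are upper bounds rather than design figures.

```python
# Racks that a fixed IT power budget can feed at different rack densities.
IT_POWER_KW = 100_000  # hypothetical 100 MW budget, overheads ignored

# Rack power ranges quoted in the text, in kW.
RACK_PROFILES = {
    "traditional (low)": 5,
    "traditional (high)": 15,
    "AI (low)": 40,
    "AI (high)": 120,
}


def racks_supported(it_power_kw: int, rack_kw: int) -> int:
    """Whole racks that fit within the power budget."""
    return it_power_kw // rack_kw


for label, rack_kw in RACK_PROFILES.items():
    count = racks_supported(IT_POWER_KW, rack_kw)
    print(f"{label:<20} {rack_kw:>4} kW/rack -> {count:>6} racks")
```

Under these assumptions the same budget feeds 6,666 to 20,000 traditional racks but only 833 to 2,500 AI racks, so a comparable amount of heat has to be removed from a much smaller footprint.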

Energy and Geography

The energy implications are reshaping the power grid. AI datacenters are driving unprecedented growth in electricity demand, and utilities that planned for flat or declining demand are now scrambling to provision gigawatts of new capacity. This has renewed interest in nuclear power, with companies signing agreements with nuclear operators and startups pursuing small modular reactors specifically to power AI facilities. AI energy consumption is becoming a significant public policy and environmental concern.

Geography matters. Datacenters cluster near cheap, abundant power (often hydroelectric or natural gas), fiber-optic backbone intersections, and favorable climate conditions. Northern Virginia, Texas, Iowa, and the Nordic countries have become AI datacenter hotspots.

The cost dynamics connect directly to the AI capability curve. AI inference costs have dropped by 92% or more in three years, from $30 per million tokens to $0.10-2.50, driven by hardware efficiency gains and the sheer scale of investment amortized across massive inference volumes. The economics of AI datacenters directly determine the accessibility of AI capabilities.
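The quoted price range can be checked directly. The sketch below computes the percentage reduction from $30 per million tokens to each end of the $0.10-2.50 range. It uses only the figures in the text, and the function name is illustrative.

```python
# Percentage price reduction implied by the figures quoted in the text.
OLD_PRICE = 30.00          # USD per million tokens, three years earlier
NEW_PRICES = (2.50, 0.10)  # upper and lower ends of the current range


def percent_drop(old_price: float, new_price: float) -> float:
    """Relative price reduction, expressed as a percentage of the old price."""
    return (old_price - new_price) / old_price * 100


for new_price in NEW_PRICES:
    drop = percent_drop(OLD_PRICE, new_price)
    print(f"${OLD_PRICE:.2f} -> ${new_price:.2f}: {drop:.1f}% lower")
```

This prints 91.7% for the $2.50 end and 99.7% for the $0.10 end, so the 92% figure corresponds to the most expensive current pricing and the cheapest options have fallen considerably further.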

Further Reading

- The State of AI Agents in 2026 — Jon Radoff (AI investment and infrastructure economics)

- Games as Products, Games as Platforms — Jon Radoff (Meta's $135B CapEx)