AI Accelerators

AI accelerators are specialized processors designed to execute the mathematical operations that dominate AI workloads — primarily matrix multiplications, convolutions, and attention computations — at much higher throughput and energy efficiency than general-purpose CPUs. They are the engines that power every foundation model, from training runs consuming megawatts to inference serving billions of queries daily.

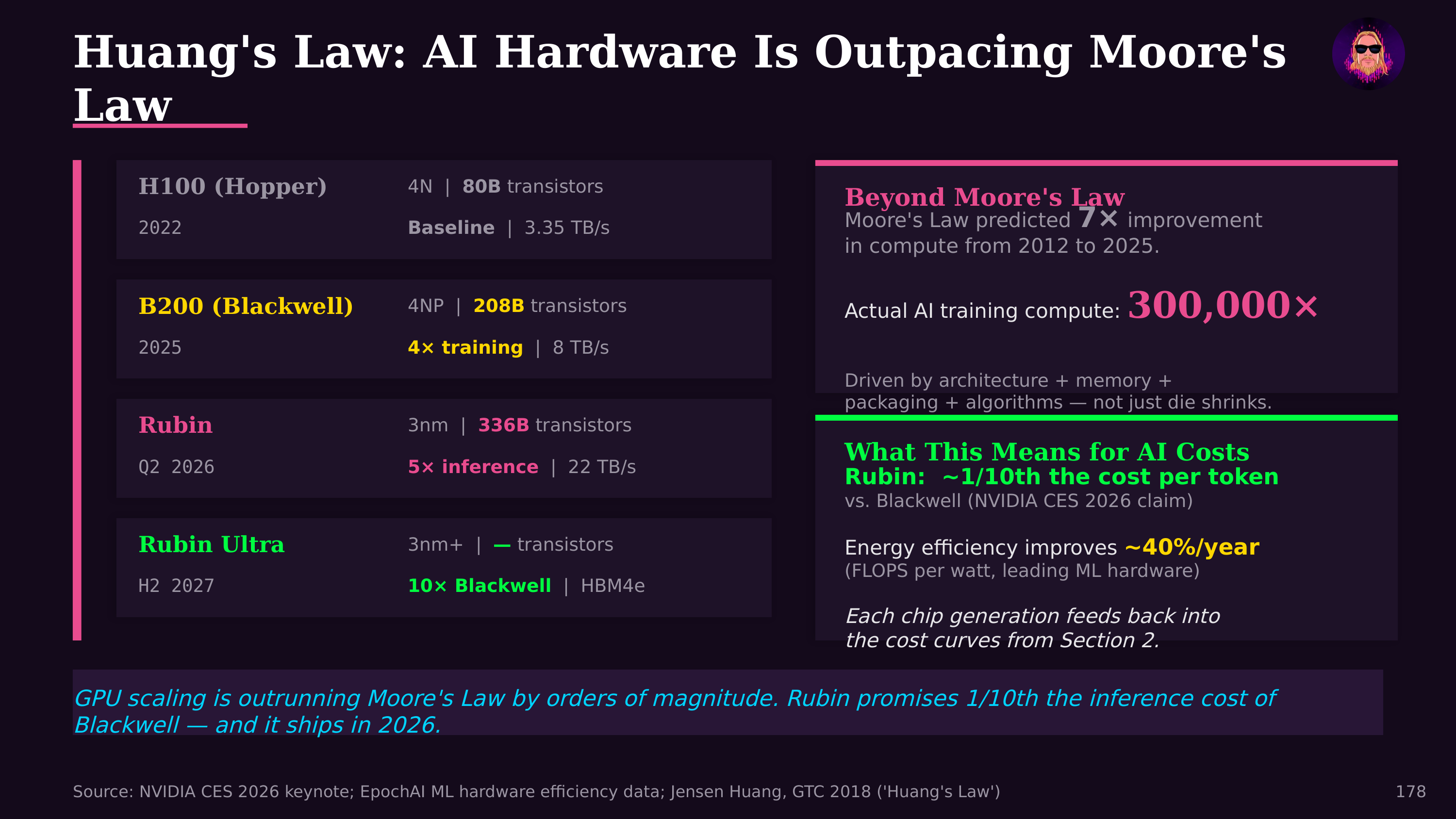

NVIDIA's GPUs dominate the AI accelerator market with roughly 80-90% share in training workloads. The progression from A100 to H100 to B200 (Blackwell) reflects relentless optimization for AI: each generation roughly doubles training throughput while introducing architectural features like transformer engines (hardware-accelerated attention computation), FP8 precision support, and tighter coupling with HBM memory. The GB200 Grace Blackwell Superchip pairs two B200 GPUs with a Grace CPU, offering 20 petaflops of AI compute per unit.

Vera Rubin and the Inference-First Architecture

At GTC 2026, Jensen Huang unveiled Vera Rubin — NVIDIA's next-generation platform after Blackwell — representing a fundamental shift toward inference-first optimization. Where previous generations prioritized raw training throughput, Vera Rubin is architected around the reality that inference now dominates AI compute demand by a factor of 100,000:1 over training. The platform delivers 35x token throughput over Hopper-generation hardware, reflecting the explosive growth in agentic AI workloads where models reason, plan, and generate millions of "thinking tokens" per query.

Vera Rubin is designed as the compute engine for AI factories — datacenters reconceived as token production facilities. The architecture tightly integrates with NVIDIA's Dynamo inference operating system, which handles intelligent batching, speculative decoding, and model routing across heterogeneous chip pools. Vera Rubin Ultra, the high-end variant, targets sovereign AI deployments and hyperscale inference clusters where cost per token is the defining metric. The Blackwell → Vera Rubin transition marks the industry's recognition that the economics of AI have shifted decisively from "how fast can we train" to "how cheaply can we serve."

The competitive landscape is broadening. Google's TPUs (Tensor Processing Units) are custom ASICs optimized for TensorFlow and JAX workloads, powering Google's own AI services and available through Google Cloud. TPU v5p clusters scale to thousands of chips connected by Google's custom ICI (Inter-Chip Interconnect). AMD's Instinct MI300X offers competitive HBM capacity (192 GB) and is gaining adoption as an NVIDIA alternative. Custom silicon from Amazon (Trainium/Inferentia), Microsoft (Maia), and Meta reflects hyperscalers' desire to reduce NVIDIA dependence. Groq's LPU (Language Processing Unit) takes a different approach entirely — a deterministic, compiler-driven architecture that achieves extreme inference speed by eliminating the memory bandwidth bottleneck through massive on-chip SRAM, trading training capability for raw token-generation throughput.

Architecture matters for different workloads. Training demands maximum floating-point throughput and inter-chip bandwidth, favoring large GPUs and TPUs in tightly connected clusters. Inference prioritizes latency, power efficiency, and cost per token, opening opportunities for smaller, more specialized chips — and this is where the market is moving fastest, with Huang projecting $500 billion in Blackwell and Vera Rubin orders and a trajectory toward $1 trillion in AI infrastructure by 2027. Edge inference — running AI on devices rather than in the cloud — favors chips like Apple's Neural Engine, Qualcomm's Hexagon NPU, and Intel's Movidius that achieve useful AI performance within smartphone or laptop power budgets.

The economic significance is hard to overstate. NVIDIA's datacenter revenue exceeded $47 billion in fiscal year 2025, making AI accelerators one of the fastest-growing hardware markets in history. The $211 billion in AI VC funding that Jon Radoff documented (50% of all global VC in 2025) ultimately flows through to accelerator purchases, datacenter construction, and the energy to power them.

Looking ahead, architectural innovation continues across several fronts: photonic computing for energy-efficient matrix multiplication, neuromorphic chips that mimic brain architecture, in-memory computing that eliminates the data movement bottleneck, and quantum processors that could eventually accelerate specific AI computations. The common thread is that AI's computational appetite continues to outpace Moore's Law, driving relentless innovation in specialized hardware.

Further Reading

- The State of AI Agents in 2026 — Jon Radoff