AI Energy Consumption

AI energy consumption refers to the electricity required to train and operate AI models, a rapidly growing share of global electricity use that is reshaping power grid planning, climate commitments, and energy infrastructure investment worldwide.

The scale is unprecedented in computing history. Training a single frontier model such as GPT-4 or Claude is estimated to have consumed 50-100 GWh of electricity, roughly enough to power 5,000-10,000 US homes for a year. But training is a one-time cost per model; inference (serving the model to users) runs continuously and at massive scale. By early 2026, AI inference is estimated to consume 3-5% of US electricity production, with projections suggesting 10-15% by 2030 if current growth trajectories continue.
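The homes comparison is simple unit arithmetic. The sketch below reproduces it under an assumed average US household consumption of about 10,500 kWh per year (an illustrative figure, not taken from the estimate itself); `home_years` is a hypothetical helper name.

```python
# Hypothetical helper: express an energy total as "US homes powered for one year".
# ASSUMPTION: an average US household uses roughly 10,500 kWh per year;
# the real figure varies by year, region, and data source.
AVG_US_HOME_KWH_PER_YEAR = 10_500

KWH_PER_GWH = 1_000_000  # 1 GWh = 10^6 kWh


def home_years(energy_gwh: float,
               home_kwh_per_year: float = AVG_US_HOME_KWH_PER_YEAR) -> float:
    """Number of average homes that energy_gwh could supply for one year."""
    if home_kwh_per_year <= 0:
        raise ValueError("home_kwh_per_year must be positive")
    return energy_gwh * KWH_PER_GWH / home_kwh_per_year


if __name__ == "__main__":
    # Bounds of the training-energy estimate, in GWh.
    for gwh in (50, 100):
        print(f"{gwh} GWh is about {home_years(gwh):,.0f} home-years")
```

Run as a script, it prints about 4,762 home-years for 50 GWh and about 9,524 for 100 GWh, consistent with the 5,000-10,000 range; a different household figure scales the result proportionally.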

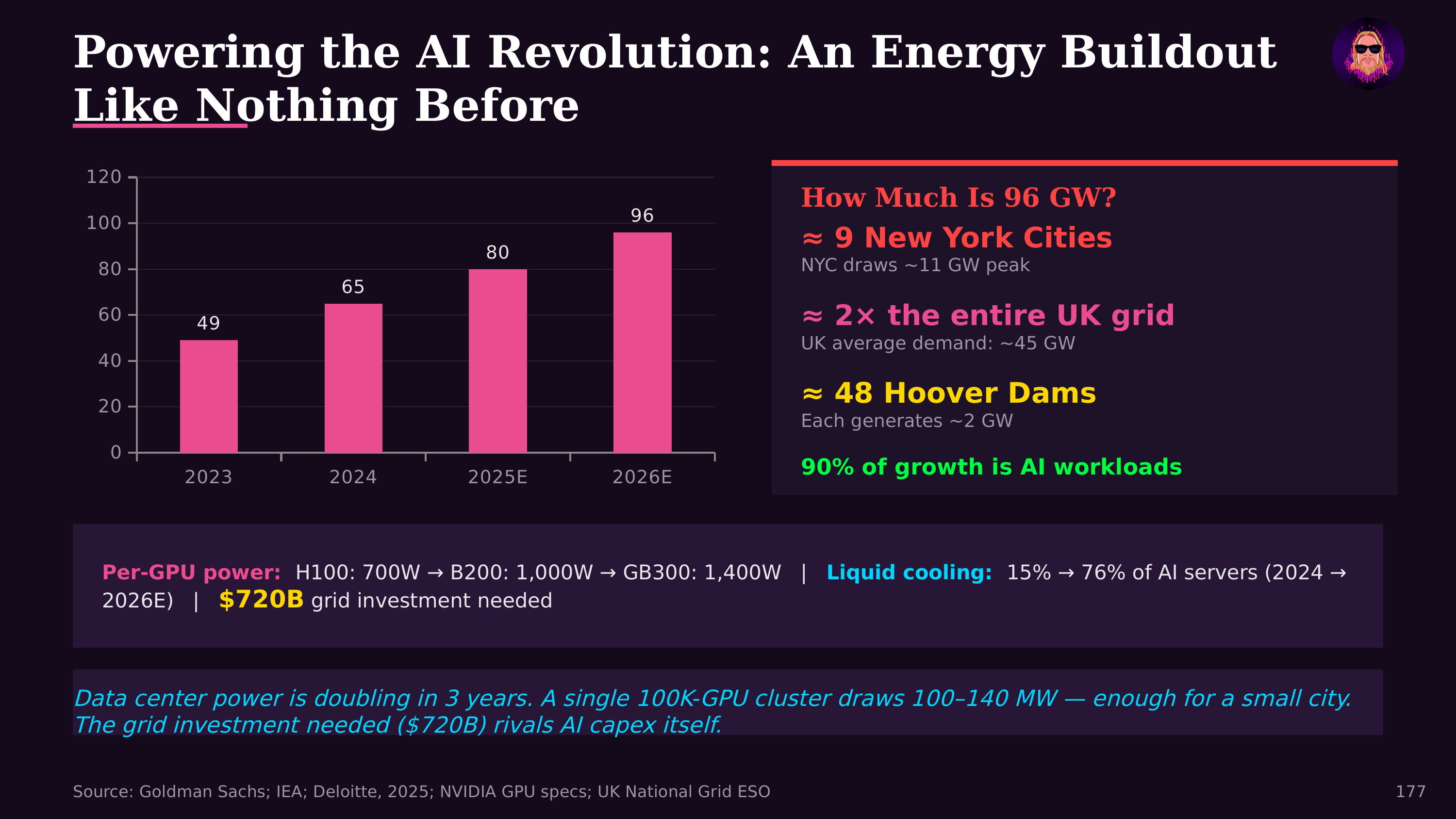

AI datacenter electricity demand is growing at a rate that has caught utilities off guard. After decades of flat or declining demand, US electricity consumption is projected to increase 15-20% over the next decade, driven primarily by AI datacenters. This has created a scramble for power: datacenter operators are signing long-term power purchase agreements, utilities are delaying coal plant retirements, and grid operators are raising concerns about reliability.

The nuclear renaissance is directly tied to AI energy demands. Microsoft signed a 20-year power purchase agreement with Constellation Energy that underpins the restart of a reactor at Three Mile Island, with the output earmarked for Microsoft's datacenters and their AI workloads. Amazon, Google, and others have signed deals with nuclear operators or invested in small modular reactor (SMR) startups. Nuclear's appeal for AI is its combination of a high capacity factor (typically above 90%, meaning a plant delivers over 90% of its maximum possible annual output), zero direct carbon emissions, and the ability to provide dedicated baseload power at datacenter scale.
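Capacity factor converts a plant's nameplate rating into the energy it actually delivers. A minimal sketch, assuming a hypothetical 1,000 MW reactor at a 90% capacity factor (illustrative values, not the specifications of any named plant); both function names are hypothetical.

```python
# Sketch: annual energy from a power plant, given nameplate capacity and capacity factor.
# ASSUMPTION: a hypothetical 1,000 MW reactor at a 90% capacity factor; real units differ.
HOURS_PER_YEAR = 8_760  # 365 days * 24 h (ignores leap years)


def annual_energy_gwh(capacity_mw: float, capacity_factor: float) -> float:
    """Energy delivered in one year, in GWh.

    capacity_factor is the fraction (0..1) of the theoretical maximum output
    that the plant actually delivers over the year.
    """
    if not 0.0 <= capacity_factor <= 1.0:
        raise ValueError("capacity_factor must be between 0 and 1")
    return capacity_mw * HOURS_PER_YEAR * capacity_factor / 1_000  # MWh -> GWh


def days_to_supply(energy_gwh: float, capacity_mw: float,
                   capacity_factor: float) -> float:
    """Days of average output needed to deliver energy_gwh."""
    return energy_gwh / annual_energy_gwh(capacity_mw, capacity_factor) * 365
```

Under these assumptions, `annual_energy_gwh(1_000, 0.9)` gives 7,884 GWh, and `days_to_supply(100, 1_000, 0.9)` shows such a plant would deliver the upper 100 GWh training estimate in under five days of average output.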

Efficiency improvements partially offset growing demand. AI inference costs have dropped 92% in three years, a decline that reflects, in part, falling energy per unit of useful AI work (cost also depends on hardware prices, competition, and margins). More efficient architectures (mixture of experts), hardware improvements (new accelerator generations), model quantization, and knowledge distillation all reduce energy per inference. But the Jevons paradox applies: as AI becomes cheaper, usage expands, and total energy use can rise even as each unit of work becomes more efficient.
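The Jevons argument reduces to one product: total energy equals energy per unit of work times units of work. The sketch below is illustrative only and assumes, hypothetically, that energy per unit of work falls by the same 92% as inference cost, which the two need not do in practice; the function names are hypothetical.

```python
# Sketch of the Jevons-paradox arithmetic:
#   total energy = (energy per unit of work) * (units of work)
# ASSUMPTION (illustration only): energy per unit of AI work falls by the same
# fraction as inference cost; real cost and energy need not move in lockstep.


def breakeven_usage_growth(efficiency_gain: float) -> float:
    """Usage multiplier at which total energy is unchanged.

    efficiency_gain is the fractional drop in energy per unit of work (e.g. 0.92).
    """
    if not 0.0 <= efficiency_gain < 1.0:
        raise ValueError("efficiency_gain must be in [0, 1)")
    return 1.0 / (1.0 - efficiency_gain)


def total_energy_ratio(efficiency_gain: float, usage_growth: float) -> float:
    """New total energy divided by old total energy."""
    if not 0.0 <= efficiency_gain < 1.0:
        raise ValueError("efficiency_gain must be in [0, 1)")
    return (1.0 - efficiency_gain) * usage_growth
```

With a 92% drop, usage must grow more than 12.5-fold before total energy rises; at 20-fold growth, `total_energy_ratio(0.92, 20)` is about 1.6, meaning 60% more energy than before despite the efficiency gain.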

Liquid cooling technology reduces the energy overhead of cooling AI hardware, improving datacenter PUE (Power Usage Effectiveness, total facility energy divided by the energy delivered to IT equipment) from 1.3-1.6 to near 1.05. This means more of each megawatt goes to useful computation rather than cooling, but it does not reduce the underlying compute demand.
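Because PUE is a ratio, it translates directly into how much facility power a given compute load requires. A minimal sketch, assuming a hypothetical 100 MW IT load; the function names are hypothetical.

```python
# Sketch: what PUE (Power Usage Effectiveness) implies for a datacenter.
# PUE = total facility energy / energy delivered to IT equipment (always >= 1.0).
# ASSUMPTION: a hypothetical 100 MW IT load, used only for illustration.


def it_fraction(pue: float) -> float:
    """Share of facility power that reaches IT equipment."""
    if pue < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    return 1.0 / pue


def facility_power_mw(it_load_mw: float, pue: float) -> float:
    """Total facility power needed to run a given IT load."""
    if pue < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    return it_load_mw * pue


if __name__ == "__main__":
    it_load = 100.0  # hypothetical IT load in MW
    for pue in (1.3, 1.6, 1.05):
        print(f"PUE {pue}: {it_fraction(pue):.1%} to IT, "
              f"{facility_power_mw(it_load, pue):.0f} MW total")
```

The same 100 MW of compute draws about 130-160 MW of facility power in the older PUE range but only about 105 MW near 1.05; the IT load itself, and hence the compute demand, stays the same.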

The energy debate intersects with AI's broader economic value proposition. If AI delivers the productivity gains its proponents claim, such as the 6x outlier performance that Jon Radoff documented, the energy investment may produce enormous economic returns. But the environmental cost, grid strain, and equity implications of concentrating energy consumption in AI infrastructure deserve serious consideration alongside the capability gains.

Further Reading

- The State of AI Agents in 2026 — Jon Radoff (AI cost curves and investment)