Open-Weight Models

Open-weight models are AI systems whose trained neural network parameters (weights) are released publicly, so anyone can download, run, and often fine-tune them, while the training data, training code, or commercial use rights may remain restricted. That gap is what separates them from fully open-source AI, and it determines what users can actually inspect, reproduce, and legally build on.

The term gained precision as the AI industry grappled with what "open" actually means. Meta's Llama models, for instance, release weights freely but restrict commercial use at very large scale: the Llama community license requires a separate agreement from Meta for products with more than 700 million monthly active users. DeepSeek releases weights under permissive licenses but doesn't share training data or the full training pipeline. Mistral and Qwen follow similar patterns. The Open Source Initiative has argued that models without full training data transparency don't qualify as truly "open source" under traditional software definitions, hence the more precise term "open-weight."

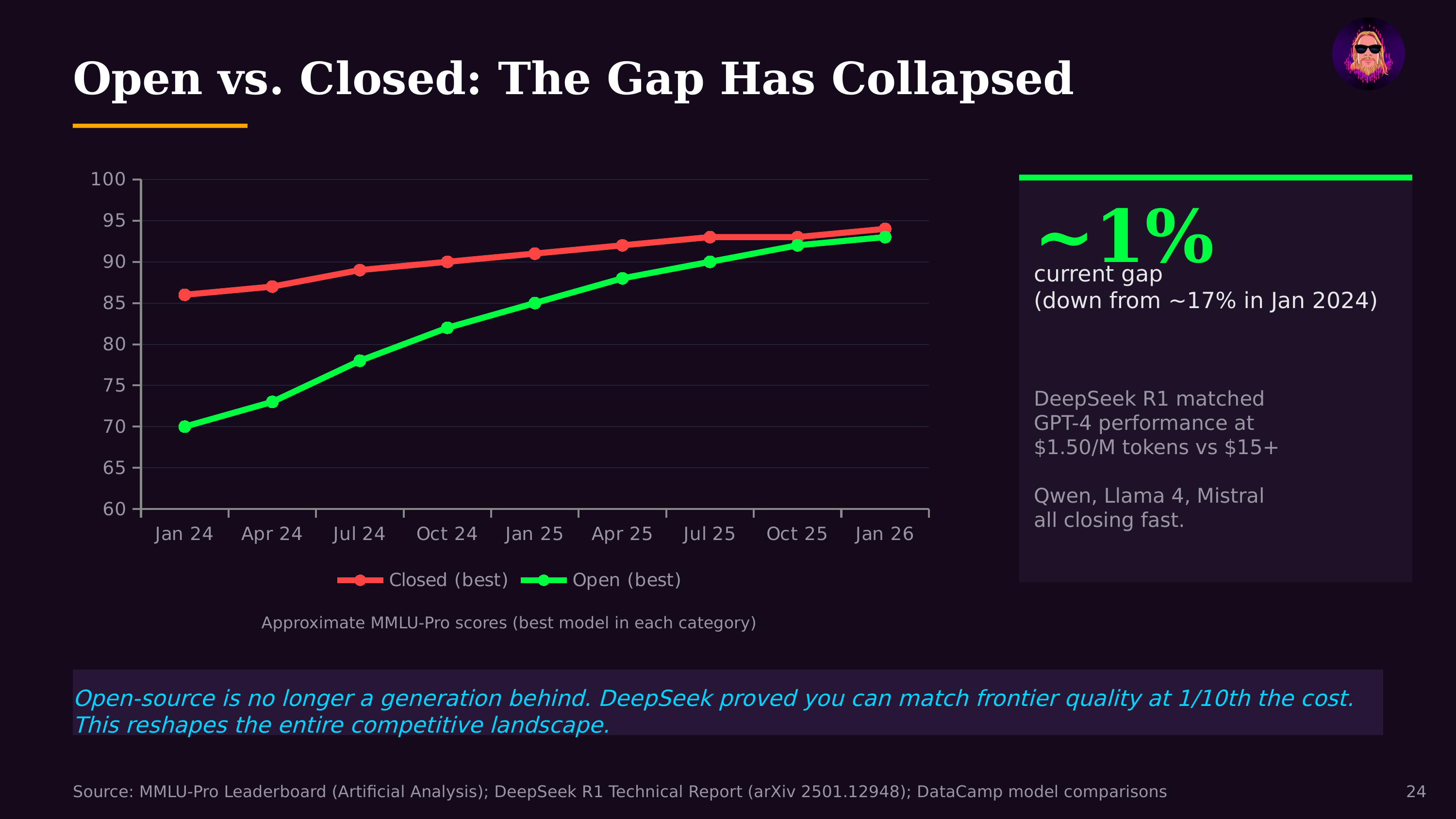

What makes open-weight models transformative is their economic impact. DeepSeek's models match frontier quality at $1.50 per million tokens, forcing dramatic price competition across the industry. The broader open-weight ecosystem (Llama, Mistral, Qwen, Gemma, Falcon) has driven AI inference costs down 92% in three years. This is the "DeepSeek effect": once open-weight models approach frontier performance, customers can switch to them, so closed-model providers cannot charge much more than the cost of running the open alternative. Open-weight pricing becomes a ceiling that even closed-model providers can't ignore.
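To put those figures in perspective, here is a rough arithmetic sketch. The $1.50 per million tokens and the 92% drop over three years come from the text above; the assumption that prices fell at a constant yearly rate, the function names, and the 50-million-token workload are illustrative only.

```python
def annualized_decline(total_decline: float, years: float) -> float:
    """Constant yearly decline rate that compounds to `total_decline` over `years`.

    Assumes a steady rate of decline, which is a simplification.
    """
    remaining = 1.0 - total_decline          # fraction of the original price left
    return 1.0 - remaining ** (1.0 / years)  # yearly rate that compounds to it


def workload_cost(tokens: int, price_per_million: float) -> float:
    """Cost in dollars of processing `tokens` at a per-million-token price."""
    return tokens / 1_000_000 * price_per_million


if __name__ == "__main__":
    rate = annualized_decline(0.92, 3)
    print(f"Implied yearly price decline: {rate:.1%}")

    # Hypothetical workload: 50 million tokens at the quoted $1.50 rate.
    print(f"Cost of 50M tokens: ${workload_cost(50_000_000, 1.50):.2f}")
```

Under the constant-rate assumption, a 92% drop over three years works out to prices falling by a little over half each year, and the hypothetical 50-million-token workload costs $75 at the quoted rate.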

For agentic engineering and the Creator Era, open-weight models are foundational infrastructure. They can be fine-tuned for specific domains, run on-premises for privacy-sensitive applications, quantized for edge deployment, and customized without vendor lock-in. This is what enables solo founders to build AI-powered products without depending on (or paying for) API access to frontier closed models.
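Quantization matters for edge deployment mainly because of memory. As a back-of-the-envelope sketch, the weights alone need roughly (parameter count × bits per weight ÷ 8) bytes. The 7-billion-parameter size and the function name below are hypothetical, and the estimate ignores activations, the KV cache, and the small overhead of quantization metadata.

```python
def weight_memory_gb(num_params: float, bits_per_weight: int) -> float:
    """Approximate memory for the weights alone, in gigabytes (10^9 bytes).

    Ignores activations, KV cache, and quantization scale/zero-point overhead.
    """
    return num_params * bits_per_weight / 8 / 1e9


if __name__ == "__main__":
    # Hypothetical 7-billion-parameter model at common precisions.
    for bits in (16, 8, 4):
        gb = weight_memory_gb(7e9, bits)
        print(f"7B parameters at {bits}-bit: {gb:.1f} GB")
```

By this estimate, the same hypothetical model shrinks from about 14 GB at 16-bit to about 3.5 GB at 4-bit, which is the difference between needing a datacenter GPU and fitting on a laptop or high-end phone. Actual quality loss from quantization depends on the model and method and is not captured here.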